GDG Hanoi photo gallery

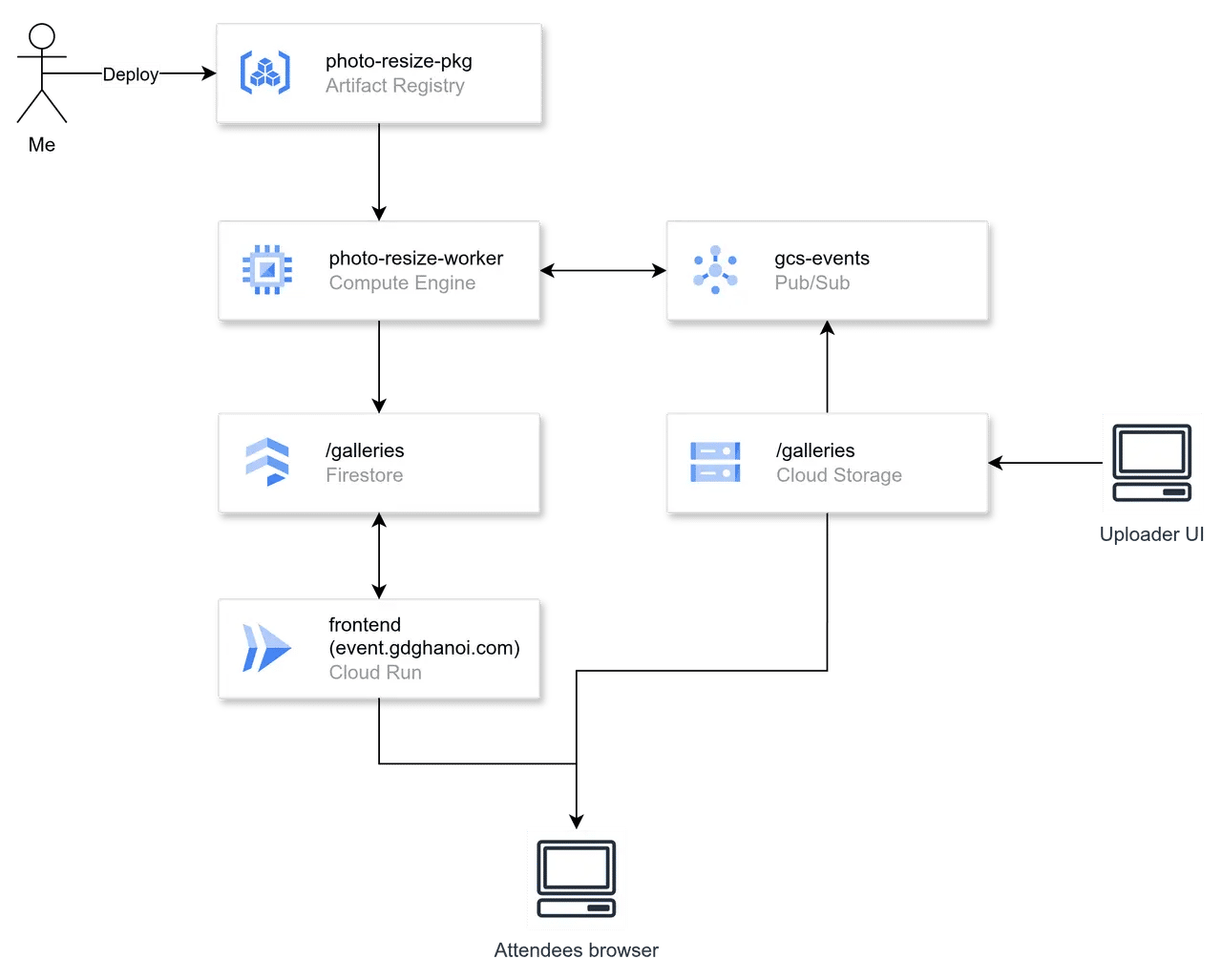

High-level design components

This post is part of a series about my design process for a photo gallery service for GDG Hanoi. You can find the introduction post here: Introduction.

What I already have

Let’s start by listing the existing resources before deciding which components to use:

- 1 existing frontend site: gdghanoi.com, hosted on Google Cloud Run.

- Approximately 800 USD in Google Cloud credits, which will expire in August of this year.

Given these, I pick Google Cloud as the primary cloud provider for the other components of the project.

For the region: the service mostly serves clients in Vietnam, so a single-region deployment is enough. Based on the Google Cloud latency dashboard, I choose Taiwan (asia-east1) as the primary region to minimize latency to Vietnam.

Deploying all components in the same region keeps communication between them fast and avoids cross-region latency and cross-region bandwidth costs. This matters because resizing photos involves transferring a lot of data between storage and workers.

Back-of-the-envelope metrics

The primary entity of the service is, obviously, a photo.

Assuming that:

- An original photo is at most 10MB and 6000x4000 pixels (240 pixels/inch), in a common format (PNG or JPEG).

- Converting to WebP can reduce the file size by up to 85%.

I will resize and convert each photo into 9 WebP versions: widths of 320, 512, 768, 1024, 1280, 1600, 2048, and 2560 pixels, plus one at the original size.

Here are the metrics I have in mind for a single event (decimal units, 1MB = 1000KB):

| Metric (per event) | Value |
|---|---|
| Maximum size of an original photo | 10MB |
| Maximum dimensions of a photo (3:2) | 6000x4000 pixels |
| 1 photo (WebP, original width) | 1.5MB |
| 1 photo (WebP, width 320px) | 80KB |
| 1 photo (WebP, width 512px) | 128KB |
| 1 photo (WebP, width 768px) | 192KB |
| 1 photo (WebP, width 1024px) | 256KB |
| 1 photo (WebP, width 1280px) | 320KB |
| 1 photo (WebP, width 1600px) | 400KB |
| 1 photo (WebP, width 2048px) | 512KB |
| 1 photo (WebP, width 2560px) | 640KB |
| Total size of a photo entity (original + all WebP versions) | 14.028MB |
| Average number of photo entities | 1000 entities |
| Total storage required | 14.028GB |
| Maximum page visits | 30000 visits |
| Number of photos fetched per page | 200 photos |
| Bandwidth per page (assuming only 1024px photos are fetched) | 51.2MB |
| Total bandwidth transferred out to the Internet | 1.54TB |

Building blocks

Storage

I need blob storage for the photos. I choose Google Cloud Storage (GCS) because it provides:

- Public URLs for serving photos, as long as the bucket is publicly readable.

- Pub/Sub notifications for every uploaded file, which simplifies triggering image processing.

- Its free tier is generous enough for the number of GDG Hanoi photos.
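To make the public-URL point concrete, here is a minimal sketch of how the generated versions could be named and their URLs derived. The bucket name `gdg-hanoi-photos` and the `resized/<event>/<photo>_w<width>.webp` naming scheme are hypothetical; the only part taken from GCS itself is the public URL form `https://storage.googleapis.com/<bucket>/<object>` for publicly readable objects. Each path segment is percent-escaped so file names containing spaces still produce valid URLs.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// publicURL builds the public URL of an object in a publicly readable bucket.
// Slashes in the object name are kept; every segment is percent-escaped.
func publicURL(bucket, object string) string {
	segments := strings.Split(object, "/")
	for i, s := range segments {
		segments[i] = url.PathEscape(s)
	}
	return "https://storage.googleapis.com/" + bucket + "/" + strings.Join(segments, "/")
}

// variantObject returns the hypothetical object name of a WebP version.
// width == 0 means the version at the original width.
func variantObject(event, base string, width int) string {
	suffix := "orig"
	if width > 0 {
		suffix = fmt.Sprintf("w%d", width)
	}
	return fmt.Sprintf("resized/%s/%s_%s.webp", event, base, suffix)
}

func main() {
	obj := variantObject("devfest-2024", "IMG 0001", 1024)
	fmt.Println(publicURL("gdg-hanoi-photos", obj))
	// https://storage.googleapis.com/gdg-hanoi-photos/resized/devfest-2024/IMG%200001_w1024.webp
}
```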

Database

The database is where I store photos’ metadata, including object IDs, event labels, public URLs, upload timestamps, etc.

I choose Google Cloud Firestore (Firebase Firestore) because:

- Its design is optimized for read-heavy applications.

- It is (almost) free, and its performance is more than sufficient for a small-scale project like this.

- It is a serverless document database, so I don’t have to deploy it on a dedicated machine.

- It runs on Google Cloud infrastructure, so the GDG Hanoi website, which is already hosted on GCP, can reach it with minimal latency.

- It provides backup and recovery features and scales horizontally on its own, so I don’t have to worry about database scalability and availability.

The only drawback I can think of is that Firestore does not support efficient offset-based pagination: the client SDKs have no offset option, and where the server SDKs offer one, the skipped documents are still read and billed. So I have to design the schema carefully to support cursor-based paging. I will dive deeper into the schema in the next post.

Photo worker

This worker is what makes the gallery’s responsive images possible: it generates the resized versions of every photo.

Because the processing logic may vary, I will write a dedicated program for this task.

The main step is image resizing, which is compute-intensive, and I don’t know its actual resource consumption yet. Thus, I choose to containerize the worker and deploy it on a compute instance, so that I can tune and measure its resource usage easily.

I may try concurrent processing to reduce image processing time, but that requires a clear estimate of the available compute resources. Btw, Go’s concurrency model sounds promising.

Message queue

As I see it, for each new photo uploaded to GCS, the worker has to be notified of the event so it can download the newly uploaded file and create its resized versions.

Therefore, the worker has to “listen” to GCS events, so I have to set up a Pub/Sub topic and subscription as the communication channel between GCS and the worker.
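On the worker side, each Pub/Sub message from a GCS notification carries the event type in its attributes (for example, `eventType` set to `OBJECT_FINALIZE` for a newly written object) and, with the `JSON_API_V1` payload format, the object’s metadata as JSON in its data. The sketch below filters those messages. The `Message` type is a local stand-in for the Pub/Sub client’s message, and the `resized/` prefix is a hypothetical convention that stops the worker from re-processing the versions it uploads itself if they live in the same bucket.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// Message is a local stand-in for a Pub/Sub message; the real client's
// message type also exposes Data and Attributes fields.
type Message struct {
	Data       []byte
	Attributes map[string]string
}

// objectMeta is the subset of the JSON_API_V1 payload (object metadata) used here.
type objectMeta struct {
	Bucket      string `json:"bucket"`
	Name        string `json:"name"`
	ContentType string `json:"contentType"`
}

// resizedPrefix is a hypothetical prefix for generated versions.
const resizedPrefix = "resized/"

// toProcess reports whether a notification describes a new original photo to resize.
func toProcess(m Message) (objectMeta, bool, error) {
	if m.Attributes["eventType"] != "OBJECT_FINALIZE" {
		return objectMeta{}, false, nil // ignore deletes, metadata updates, etc.
	}
	var meta objectMeta
	if err := json.Unmarshal(m.Data, &meta); err != nil {
		return objectMeta{}, false, fmt.Errorf("decode payload: %w", err)
	}
	if strings.HasPrefix(meta.Name, resizedPrefix) {
		return meta, false, nil // our own output; processing it would loop forever
	}
	isImage := meta.ContentType == "image/jpeg" || meta.ContentType == "image/png"
	return meta, isImage, nil
}

func main() {
	msg := Message{
		Attributes: map[string]string{"eventType": "OBJECT_FINALIZE"},
		Data:       []byte(`{"bucket":"gdg-hanoi-photos","name":"originals/devfest/IMG_0001.jpg","contentType":"image/jpeg"}`),
	}
	meta, ok, err := toProcess(msg)
	fmt.Println(meta.Name, ok, err) // originals/devfest/IMG_0001.jpg true <nil>
}
```

GCS notification configurations can also be limited to specific event types and an object name prefix, which would move part of this filtering to the bucket side. Either way, the worker should acknowledge a message only after processing succeeds, so that Pub/Sub redelivers it on failure.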

Uploader frontend

The media team needs a dedicated web interface to upload photos in batches. It should let them:

- Specify the event the photos belong to.

- Choose multiple photos to upload at once.

- View the status of the upload.

- See the uploaded photos reflected in the gallery pages.

Btw, I give this component the lowest implementation priority, so I should prepare some apologies to the media members in case anything on the site does not follow the design lol.

Gallery backend & frontend

The GDG Hanoi website is built on Next.js, so I already have a server that performs two tasks:

- Serve the static pages & server-rendered React components.

- Serve some simple HTTP REST API routes.

I will have the website query Firestore directly on the server side, then deliver the gallery pages to the client and expose open APIs for fetching photos. That minimizes the effort required to implement the gallery pages.

I did consider whether I needed a CDN to serve the photos, but decided against it because:

- The GCS bucket is publicly accessible, so I can serve the photos directly from its public URLs.

- For now, the service is primarily intended for clients in Vietnam, and a CDN would bring little benefit because Taiwan is already one of the closest Google Cloud regions to Vietnam.

- Even if a CDN cached the photos at PoPs around Vietnam, such as Singapore, Malaysia, or Hong Kong, I don’t expect a noticeable latency difference compared to serving from Taiwan.

- Yeah, it is not free.