GDG Hanoi photo gallery

Photo processing designs

This post is part of a series about my design process for a photo gallery service for GDG Hanoi. You can find the introduction post here: Introduction.

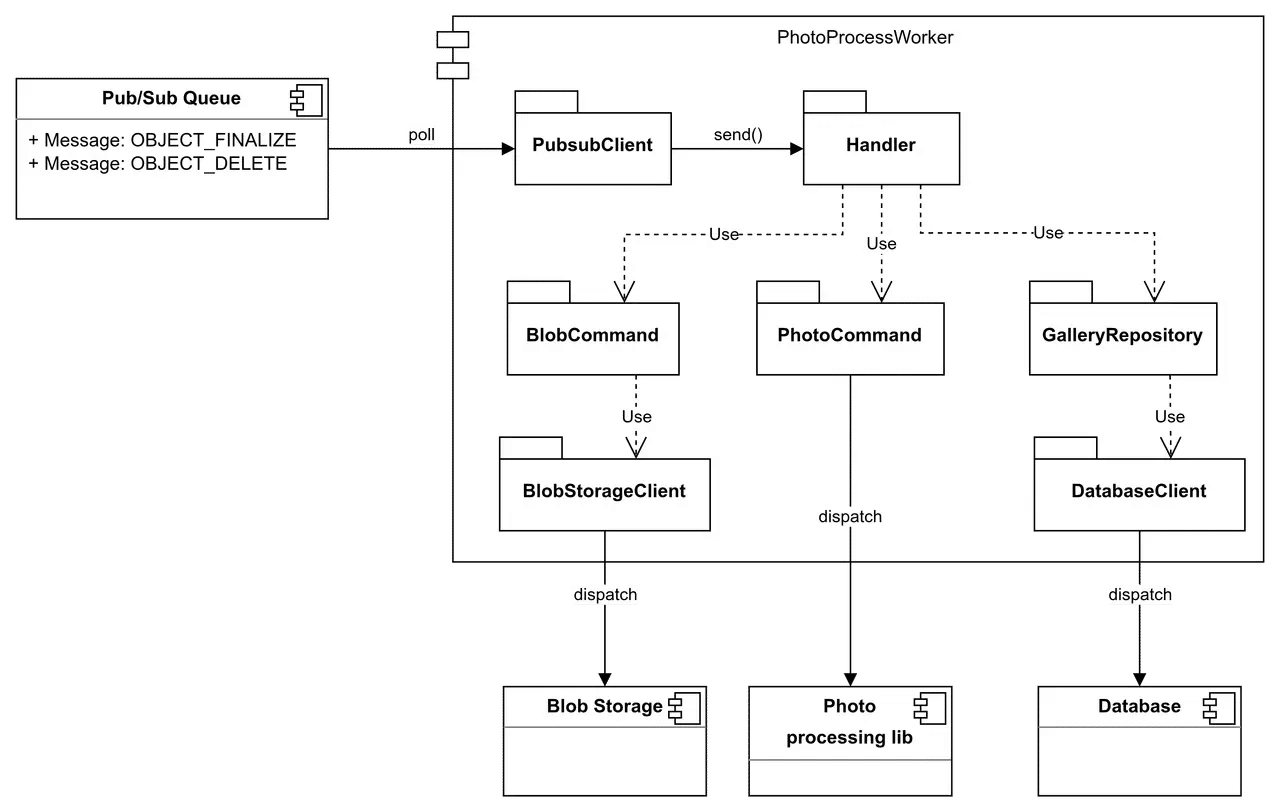

This is the critical component of our gallery service, which performs write operations for photo entities on the gallery’s database and storage. It is the only component I had to build from scratch.

In this post, I would like to share how I designed and built it.

Functionalities

For the triggered endpoint, the worker plays the role of a consumer in the system's pub/sub pattern. Its source code provides a client that pulls messages from the remote message queue and forwards them to handler methods.
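The pull-and-forward behaviour described above can be sketched as follows. This is a minimal sketch, not the worker's actual code: the `Message` envelope, the event names `register` and `deregister`, and the `Dispatcher` type are all hypothetical, and a buffered Go channel stands in for the remote message queue.

```go
package main

import (
	"errors"
	"fmt"
)

// Message is a hypothetical envelope; the real queue payload may differ.
type Message struct {
	Event     string // assumed event names: "register", "deregister"
	ObjectKey string
}

// Handler processes a single message.
type Handler func(Message) error

// ErrUnknownEvent is returned when no handler is registered for an event.
var ErrUnknownEvent = errors.New("unknown event")

// Dispatcher forwards each pulled message to the handler for its event.
type Dispatcher struct {
	handlers map[string]Handler
}

func NewDispatcher() *Dispatcher {
	return &Dispatcher{handlers: make(map[string]Handler)}
}

// Handle registers h as the handler for the given event name.
func (d *Dispatcher) Handle(event string, h Handler) {
	d.handlers[event] = h
}

// Dispatch looks up the handler for m.Event and runs it.
func (d *Dispatcher) Dispatch(m Message) error {
	h, ok := d.handlers[m.Event]
	if !ok {
		return fmt.Errorf("%w: %q", ErrUnknownEvent, m.Event)
	}
	return h(m)
}

// Consume drains the queue until it is closed and returns the number of
// messages that were handled without error.
func (d *Dispatcher) Consume(queue <-chan Message) int {
	handled := 0
	for m := range queue {
		if err := d.Dispatch(m); err != nil {
			fmt.Println("skip:", err)
			continue
		}
		handled++
	}
	return handled
}

func main() {
	d := NewDispatcher()
	d.Handle("register", func(m Message) error {
		fmt.Println("register", m.ObjectKey)
		return nil
	})
	d.Handle("deregister", func(m Message) error {
		fmt.Println("deregister", m.ObjectKey)
		return nil
	})

	// A buffered channel stands in for the remote message queue.
	queue := make(chan Message, 3)
	queue <- Message{Event: "register", ObjectKey: "uploads/a.jpg"}
	queue <- Message{Event: "deregister", ObjectKey: "uploads/a.jpg"}
	queue <- Message{Event: "rename", ObjectKey: "uploads/a.jpg"}
	close(queue)

	fmt.Println("handled:", d.Consume(queue))
}
```

Keeping the dispatch table separate from the pulling loop means the queue client can be swapped without touching the handlers.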

For registering a photo entity, the worker has to:

- Download the original photo from the upload storage bucket.

- Resize the photo to various versions and formats.

- Upload the resized copies back to the bucket.

- Construct a photo entity record and insert it into the photo database.
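The four registration steps above can be sketched as one function. This is only an illustration under stated assumptions: `Storage`, `PhotoDB`, `Resizer`, `PhotoRecord` and the `_<width>.webp` key naming are hypothetical stand-ins for the cloud storage client, the photo database, libvips and the real schema, and in-memory fakes replace the real services.

```go
package main

import (
	"fmt"
	"path"
	"strings"
)

// Storage, PhotoDB and Resizer are hypothetical seams standing in for the
// cloud storage client, the photo database and libvips.
type Storage interface {
	Download(key string) ([]byte, error)
	Upload(key string, data []byte) error
}

type PhotoRecord struct {
	OriginalKey string
	Variants    map[int]string // width in px -> object key
}

type PhotoDB interface {
	Insert(rec PhotoRecord) error
}

type Resizer func(src []byte, width int) ([]byte, error)

// variantKey uses an assumed naming scheme: "a/b.jpg" at 320 -> "a/b_320.webp".
func variantKey(original string, width int) string {
	base := strings.TrimSuffix(original, path.Ext(original))
	return fmt.Sprintf("%s_%d.webp", base, width)
}

// Register runs the four steps in order and stops at the first error.
func Register(s Storage, db PhotoDB, resize Resizer, key string, widths []int) (PhotoRecord, error) {
	rec := PhotoRecord{OriginalKey: key, Variants: make(map[int]string)}
	src, err := s.Download(key) // 1. download the original
	if err != nil {
		return rec, fmt.Errorf("download %s: %w", key, err)
	}
	for _, w := range widths {
		out, err := resize(src, w) // 2. resize to one version
		if err != nil {
			return rec, fmt.Errorf("resize to %d: %w", w, err)
		}
		vk := variantKey(key, w)
		if err := s.Upload(vk, out); err != nil { // 3. upload the copy
			return rec, fmt.Errorf("upload %s: %w", vk, err)
		}
		rec.Variants[w] = vk
	}
	if err := db.Insert(rec); err != nil { // 4. insert the record
		return rec, fmt.Errorf("insert record: %w", err)
	}
	return rec, nil
}

// memStorage and memDB are in-memory fakes used only for this example.
type memStorage map[string][]byte

func (m memStorage) Download(key string) ([]byte, error) {
	data, ok := m[key]
	if !ok {
		return nil, fmt.Errorf("object %q not found", key)
	}
	return data, nil
}

func (m memStorage) Upload(key string, data []byte) error {
	m[key] = data
	return nil
}

type memDB struct{ rows []PhotoRecord }

func (d *memDB) Insert(rec PhotoRecord) error {
	d.rows = append(d.rows, rec)
	return nil
}

func main() {
	store := memStorage{"uploads/a.jpg": []byte("raw")}
	db := &memDB{}
	// The fake resizer only tags the bytes; a real one would call libvips.
	fake := func(src []byte, width int) ([]byte, error) {
		return []byte(fmt.Sprintf("%s@%d", src, width)), nil
	}
	rec, err := Register(store, db, fake, "uploads/a.jpg", []int{320, 512})
	fmt.Println(rec.Variants, err)
}
```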

For deregistering a photo entity, it has to:

- Extract the original object key and transform it to the corresponding derived object keys.

- Delete all copies of the photo from the storage according to the derived object keys, as well as the original one.

- Be able to skip deleting the resized photos when they have already been removed during the original event.

- Remove the photo entity record from the database.
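The key derivation and the skip-if-already-removed behaviour from the list above could look like the sketch below. The `_<width>.webp` naming scheme, the `Deleter` interface and `ErrNotFound` are assumptions made for illustration; a real storage client reports a missing object in its own way, and the database removal step is left out.

```go
package main

import (
	"errors"
	"fmt"
	"path"
	"strings"
)

// ErrNotFound is a hypothetical sentinel for "object does not exist".
var ErrNotFound = errors.New("object not found")

// derivedKeys maps an original key to its resized keys, using an assumed
// naming scheme: "a/b.jpg" with width 320 -> "a/b_320.webp".
func derivedKeys(original string, widths []int) []string {
	base := strings.TrimSuffix(original, path.Ext(original))
	keys := make([]string, 0, len(widths))
	for _, w := range widths {
		keys = append(keys, fmt.Sprintf("%s_%d.webp", base, w))
	}
	return keys
}

// Deleter is a hypothetical seam for the storage client.
type Deleter interface {
	Delete(key string) error
}

// DeleteAll removes the derived copies and then the original. Objects that
// are already gone are counted as skipped instead of failing the task.
func DeleteAll(d Deleter, original string, widths []int) (int, int, error) {
	deleted, skipped := 0, 0
	keys := append(derivedKeys(original, widths), original)
	for _, k := range keys {
		delErr := d.Delete(k)
		switch {
		case delErr == nil:
			deleted++
		case errors.Is(delErr, ErrNotFound):
			skipped++
		default:
			return deleted, skipped, fmt.Errorf("delete %s: %w", k, delErr)
		}
	}
	return deleted, skipped, nil
}

// memBucket is an in-memory fake bucket used only for this example.
type memBucket map[string]bool

func (b memBucket) Delete(key string) error {
	if !b[key] {
		return ErrNotFound
	}
	delete(b, key)
	return nil
}

func main() {
	bucket := memBucket{"uploads/a.jpg": true, "uploads/a_320.webp": true}
	deleted, skipped, err := DeleteAll(bucket, "uploads/a.jpg", []int{320, 512})
	fmt.Println(deleted, skipped, err)
}
```

Treating a missing object as a skip keeps the handler idempotent, so a redelivered message does not fail the second time.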

Implementation drafts

Sketch diagram

Programming language

I chose Golang. Besides my interest in trying this programming language, it also offers many advantages:

- Performance in compute-intensive operations.

- Small (optimized) memory footprint.

- Concurrency friendly and simple to implement via its goroutines and channels.

- Simple CLI tools and package orchestration.

- Strict data types and type safety checked at build time.

- Complete support of standard libraries, including file handling, logging, JSON data serialization, etc.

- Complete support of cloud client libraries.

Image processing library

I chose libvips, which offers low execution time and low memory usage for image operations.

Concurrency keynotes

By choosing Golang, I would like to delegate the whole concurrency orchestration to its goroutines and channels at the application level.

It’s worth mentioning here because libvips also offers its own concurrency model, so I have to make sure the library does not multi-thread internally as well, to prevent CPU thrashing.

Another significant aspect is managing concurrency in message pulling. We can pull messages over multiple channels, depending on the number of CPU threads available on the underlying compute instance.
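A minimal sketch of application-level concurrency sized to the CPU count is shown below. `RunWorkers` and the job keys are hypothetical, and a closed channel stands in for the message queue. For the libvips side, one option (an assumption about how the worker could be configured, not a confirmed setting) is the `VIPS_CONCURRENCY` environment variable, which libvips reads to size its own thread pool; setting it to 1 before the library starts leaves the parallelism to the goroutines.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

// RunWorkers starts n goroutines that pull jobs from the channel until it is
// closed, and returns how many jobs were processed in total.
func RunWorkers(n int, jobs <-chan string, handle func(string)) int {
	var wg sync.WaitGroup
	var mu sync.Mutex
	processed := 0
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for job := range jobs {
				handle(job)
				mu.Lock()
				processed++
				mu.Unlock()
			}
		}()
	}
	wg.Wait()
	return processed
}

func main() {
	// One puller per logical CPU of the underlying instance.
	n := runtime.NumCPU()

	jobs := make(chan string, 8)
	for i := 0; i < 8; i++ {
		jobs <- fmt.Sprintf("uploads/photo-%d.jpg", i)
	}
	close(jobs)

	done := RunWorkers(n, jobs, func(key string) {
		// A real handler would download, resize and upload here.
	})
	fmt.Printf("%d workers processed %d jobs\n", n, done)
}
```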

Processing metrics estimation

Memory consumption

I will calculate the maximum memory consumption per execution task (concurrent goroutine) based on this formula:

$$M_{task} = (M_{go} + M_{client} + M_{vips}) \times (1 + r)$$

Where:

- $M_{go}$: The memory required for the Go runtime, assuming it is around 20MB.

- $M_{client}$: The memory required for the cloud storage client, assuming it is around 5MB.

- $M_{vips}$: The memory required for libvips overhead. By default, libvips opens images in random access mode, so it may hold the decoded image in memory up to its threshold (100MB by default) while processing.

- $r$: The safety buffer ratio to account for unexpected memory usage. I choose 20% (0.2) for this.

Therefore, the maximum memory consumption per execution task is:

$$M_{task} = (20 + 5 + 100) \times (1 + 0.2) = 150 \text{ MB}$$

With the per-task memory estimate, I can sketch a table of memory estimates for the hosting machine:

| Items | Units | Quantities |
|---|---|---|
| RAM per concurrent goroutine | MB | 150 |
| Max concurrent tasks per 8GB pool | tasks | 53 |
| Max concurrent tasks per 2GB pool | tasks | 13 |
| Concurrency assumption | channels | 16 |
| RAM reservation by concurrency assumption | MB | 2400 |
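The arithmetic behind this table can be reproduced with a few lines of Go. This sketch assumes the per-task figure is the sum of the three memory components scaled by the 20% buffer, and that the pool sizes are counted as 1GB = 1000MB, which is what matches the 53 and 13 in the table. The function names are hypothetical.

```go
package main

import "fmt"

// perTaskMB adds the three memory components (in MB) and applies the safety
// buffer, given as a percentage to keep the arithmetic in integers.
func perTaskMB(runtimeMB, clientMB, vipsMB, bufferPct int) int {
	return (runtimeMB + clientMB + vipsMB) * (100 + bufferPct) / 100
}

// maxTasks is how many tasks fit in a memory pool, rounded down.
func maxTasks(poolMB, taskMB int) int {
	return poolMB / taskMB
}

func main() {
	task := perTaskMB(20, 5, 100, 20) // Go runtime, storage client, libvips, 20% buffer
	fmt.Println("RAM per concurrent goroutine (MB):", task)
	fmt.Println("Max tasks per 8GB pool:", maxTasks(8000, task))
	fmt.Println("Max tasks per 2GB pool:", maxTasks(2000, task))
	fmt.Println("RAM for 16 channels (MB):", 16*task)
}
```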

Execution time

I did an experiment to measure the execution time of a single task on my local machine (Intel(R) Core(TM) i7-14700F CPU @ 5.40GHz, 28 threads).

    #!/bin/bash
    # hyperfine measures each command itself, so no `time` prefix is needed.
    # -w 3 runs three warmup iterations before the measured runs.
    hyperfine -w 3 \
      "vipsthumbnail test.jpg -o test.webp -s 320" \
      "vipsthumbnail test.jpg -o test.webp -s 512" \
      "vipsthumbnail test.jpg -o test.webp -s 768" \
      "vipsthumbnail test.jpg -o test.webp -s 1024" \
      "vipsthumbnail test.jpg -o test.webp -s 1280" \
      "vipsthumbnail test.jpg -o test.webp -s 1600" \
      "vipsthumbnail test.jpg -o test.webp -s 2048" \
      "vipsthumbnail test.jpg -o test.webp -s 2560" \
      "vips webpsave test.jpg test.webp"

It gave me the following mean metrics:

| Metrics | Values (approximately) |
|---|---|
| Photo size | 6MB |
| Resized photo total size | 2.417MB |
| Download time (235 Mbps) | 204ms |
| Upload time (16 Mbps) | 1209ms |
| Resize time (320px) | 150ms |
| Resize time (512px) | 175ms |
| Resize time (768px) | 240ms |
| Resize time (1024px) | 270ms |
| Resize time (1280px) | 360ms |
| Resize time (1600px) | 400ms |
| Resize time (2048px) | 430ms |
| Resize time (2560px) | 500ms |
| Convert time (original) | 850ms |
| Total | 4788ms |
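The transfer times and the total in this table can be cross-checked with a short calculation. The sketch assumes the download and upload rows are derived as size divided by bandwidth (megabytes converted to megabits), which matches the figures in the table; `transferMs` and `sumMs` are hypothetical helper names.

```go
package main

import "fmt"

// transferMs estimates how long it takes, in milliseconds, to move sizeMB
// megabytes over a link of mbps megabits per second.
func transferMs(sizeMB, mbps float64) float64 {
	return sizeMB * 8 / mbps * 1000
}

// sumMs adds up a list of durations given in milliseconds.
func sumMs(values ...int) int {
	total := 0
	for _, v := range values {
		total += v
	}
	return total
}

func main() {
	fmt.Printf("download: %.1f ms\n", transferMs(6, 235))
	fmt.Printf("upload: %.1f ms\n", transferMs(2.417, 16))

	// Download, upload, eight resizes and the original conversion,
	// using the rounded values from the table.
	total := sumMs(204, 1209, 150, 175, 240, 270, 360, 400, 430, 500, 850)
	fmt.Println("total:", total, "ms")
}
```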

Deployment

Following up on the estimation, I can now decide on the runner capacity for the photo processing worker.

As stated in the high-level design, I consider deploying on Google Cloud services only. At the moment, two options come to mind:

- Deploying on GCE instances

- Deploying on Cloud Run functions (formerly known as Cloud Functions)

Deploying on GCE instances

The event usually lasts one day, so it is fair to assume that we just need to keep the worker alive for 24 hours.

Therefore, I can consider cherry-picking a high-end instance to host the worker.

For the Build with AI Hanoi 2026 event day, I chose a c2d-standard-4 spot instance to deploy the worker. This instance features:

- C2D machine series, which is best suited for high-performance computing.

- 4 vCPUs, so I can run up to 4 message polling channels concurrently.

- 16GB RAM.

- An AMD processor that is cheaper on average than Intel’s CPUs.

- AVX2 instruction set implementation that is robust enough for standard image processing tasks.

- Around 4 USD for running for 24 hours as a spot instance.

Why that much RAM?

- There are a lot of GCP credits left.

- I want to try a hack that mounts libvips’ temporary directory on RAM, so file handling tasks run directly in memory instead of on disk, which should boost performance.

For the c2d-standard-4 instance, I can calculate the image throughput per hour as:

$$T = \frac{3600}{t} \times N$$

Where:

- $T$: The image throughput of a machine, in images per hour.

- $t$: Processing time of one task, in seconds.

- $N$: The number of vCPUs of the machine.
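As a rough worked example, the throughput can be computed as below. It assumes one task runs per vCPU at a time, and it plugs in the 4788ms total from the local benchmark, which includes network transfer and was not measured on the c2d instance, so the real figure may differ. `throughputPerHour` is a hypothetical helper.

```go
package main

import "fmt"

// throughputPerHour estimates images per hour for a machine that runs one
// task per vCPU, where each task takes taskSeconds to complete.
func throughputPerHour(vcpus int, taskSeconds float64) float64 {
	return 3600 / taskSeconds * float64(vcpus)
}

func main() {
	// 4 vCPUs and the locally measured 4.788 s per task (an assumption
	// for this instance type).
	fmt.Printf("%.0f images per hour\n", throughputPerHour(4, 4.788))
}
```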

Deploying on Cloud Run functions

The GCE approach is not really a good fit for long-term runs due to its cost, especially given the current usage and traffic. Lol, I’m still not rich enough to throw money away easily -_-.

In the future, when the GCP credits expire, I will consider moving to Cloud Run functions, which mostly charge for CPU time consumed. I’m not gonna confirm it’s a dramatically cheaper choice, but it potentially is.

By setting up the functions, I can utilize:

- Easier automatic horizontal scaling.

- Smaller capacity per instance, but it still can work well with Golang concurrency.

At this time, I have not tried this approach in practice, so I won’t give any more details about the technical specifications.